Working on side projects is a good way to practice data engineering, gain experience, and show your work. Whether you are a new or an experienced data engineer, you might be eager to find one. Side projects can spark discussions that help you land your dream job. This article introduces six data engineering side project ideas that work regardless of your experience.

You can also list side projects on your resume to attract more attention and to give yourself talking points during an interview. Many data engineers add a “projects” section to their resumes. Those projects show that candidates practice data engineering beyond their day job and work on something interesting, and relevant to the interview, outside their regular working routine.

1. Build a Real-time Streaming Data Processing Pipeline

Example: Storm Live Streaming Meetup Data, Elastic Search, and Kibana DashBoard (Meetup Event Feed)

Building a real-time streaming data pipeline is challenging at scale in production. For a side project, however, features usually matter more than maintenance (although actual interviews might focus on how you maintain a streaming data pipeline). The questions most frequently asked about a real-time data pipeline during an interview are the following:

- The data processing architecture (it would be great if you could draw a diagram) + use case (why you need to build streaming vs. batch)

- Data volume

- Processing latency (how to handle late-arriving data)
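The late-arrival question above can be made concrete with a small sketch. This is not how any particular engine implements it; it is a simplified, pure-Python version of the watermark idea that engines such as Flink and Beam expose. The window size, the allowed lateness, and the function names are all assumptions chosen for illustration.

```python
from collections import defaultdict

WINDOW_SECONDS = 60    # tumbling window size (assumed for this sketch)
ALLOWED_LATENESS = 30  # how far behind the newest event a window stays open (assumed)


def window_start(event_time: int) -> int:
    """Align an event timestamp to the start of its tumbling window."""
    return event_time - (event_time % WINDOW_SECONDS)


def count_by_window(events):
    """Count events per event-time window and collect events that arrive too late.

    `events` is an iterable of (event_time, payload) pairs in arrival order.
    The watermark is the largest event time seen so far minus the allowed lateness.
    """
    counts = defaultdict(int)
    dropped = []
    max_event_time = 0
    for event_time, payload in events:
        max_event_time = max(max_event_time, event_time)
        watermark = max_event_time - ALLOWED_LATENESS
        window_end = window_start(event_time) + WINDOW_SECONDS
        if window_end <= watermark:
            # The window this event belongs to is already considered final.
            dropped.append((event_time, payload))
            continue
        counts[window_start(event_time)] += 1
    return dict(counts), dropped


# Events in arrival order; timestamps are seconds of event time.
arrivals = [(5, "a"), (50, "b"), (65, "c"), (58, "d"), (130, "e"), (10, "f")]
counts, dropped = count_by_window(arrivals)
print(counts)   # {0: 3, 60: 1, 120: 1}
print(dropped)  # [(10, 'f')]
```

Event `d` arrives out of order but is still counted because its window has not passed the watermark; event `f` is too late and is set aside. In an interview, being able to explain that trade-off between completeness and latency matters more than the code itself.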

To build a modern data streaming pipeline, you will work with frameworks like Kafka and Pulsar, which serve as the message bus. Beam, Flink, Spark Streaming, and Storm are commonly used for data processing.

A bonus is to build a fancy data visualization to show on digital signage. It is cool to watch the display keep updating while the data processing runs.

Where can you find a free data source for your streaming project? Finding an accessible data source is the first big step. Here are some public streaming data feeds I found helpful:

- Meetup event feed (the data source I used to build the data streaming pipeline)

- Wikipedia Feed

- Weather Feed

- Bike Sharing Data Feed (I built another application with this data source)

2. In-Depth Analytics on A Dataset

Example: Frontpage Slickdeals Data Analysis with Pandas and Plotly Express

One of the critical skills for a successful data engineer is analyzing a given dataset and drawing insights from it. The work can range from validating a single entry to computing a project’s KPIs. Analytics projects on a resume will draw your interviewer’s attention and prompt follow-up questions.

Getting a large public dataset is much easier than it used to be. kaggle.com hosts competitions, provides access to public datasets, and lets you share your analysis online. You can also share your research and get feedback from the Kaggle community. If you list an analysis project on your resume, be prepared to answer the following questions:

- What is the data model of your dataset? Please draw an ER (entity-relationship) diagram.

- What tools do you use for analysis?

- What insights did you gain from your analysis?
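As a small illustration of the "what insights" question, here is a minimal sketch that computes the average discount per category from a deals dataset. The column names and sample rows are hypothetical, and it uses only the standard library to stay self-contained; in a real project the same grouping would typically be a one-line `groupby` in Pandas.

```python
import csv
import io
from collections import defaultdict
from statistics import mean

# Hypothetical sample; a real project would read a downloaded CSV file instead.
SAMPLE_CSV = """category,list_price,deal_price
laptop,1000,800
laptop,1200,900
headphones,200,150
headphones,100,90
"""


def average_discount_by_category(csv_text: str) -> dict:
    """Return the mean discount percentage per category, rounded to one decimal."""
    discounts = defaultdict(list)
    for row in csv.DictReader(io.StringIO(csv_text)):
        list_price = float(row["list_price"])
        deal_price = float(row["deal_price"])
        discounts[row["category"]].append((list_price - deal_price) / list_price * 100)
    return {category: round(mean(values), 1) for category, values in discounts.items()}


print(average_discount_by_category(SAMPLE_CSV))
# {'laptop': 22.5, 'headphones': 17.5}
```

Even a tiny result like this gives you something specific to talk about: which categories are discounted most, and whether that matches your expectations.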

There are some famous public datasets that you can analyze in depth with SQL, Python, or R:

3. Build a Web Crawler

Example: How To Detect The Best Deals With Web Scraping (with Raspberry Pi and Scrapy)

Crawling websites is a helpful skill for data engineers because it teaches you how to extract unstructured data. Many information-rich pages make good targets: you can build a crawler and schedule it with a cron job or Apache Airflow to periodically pull the latest data and store it for later analysis. Many businesses are built on top of crawling: they take snapshots of online data and let users access that historical data. Some candidates for crawling projects:

The Top 10 Most Scraped Websites list would also give you some ideas to start with. Once you have scraped the data, you will need to build an ETL pipeline to transform it and eventually load it into storage. This process further enhances your data engineering skills.
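The extract, transform, and load steps can be sketched end to end with the standard library. This is an illustration under stated assumptions, not the Scrapy setup from the example project: the HTML snippet, the `deal` class, the `data-price` attribute, and the table layout are all hypothetical, and a real crawler would fetch the page over HTTP and respect the site's terms and robots.txt.

```python
import sqlite3
from html.parser import HTMLParser

# Hypothetical page snippet; a real crawler would fetch this HTML over HTTP.
SAMPLE_HTML = """
<ul>
  <li class="deal" data-price="$19.99">USB-C Cable</li>
  <li class="deal" data-price="$249.00">Monitor</li>
  <li class="ad">Sponsored</li>
</ul>
"""


class DealParser(HTMLParser):
    """Extract (title, raw_price) pairs from <li class="deal"> elements."""

    def __init__(self):
        super().__init__()
        self.deals = []
        self._current_price = None

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "li" and attributes.get("class") == "deal":
            self._current_price = attributes.get("data-price")

    def handle_data(self, data):
        # Only text inside a deal element is kept; whitespace is ignored.
        if self._current_price is not None and data.strip():
            self.deals.append((data.strip(), self._current_price))
            self._current_price = None

    def handle_endtag(self, tag):
        if tag == "li":
            self._current_price = None


def run_etl(html: str, connection: sqlite3.Connection) -> int:
    """Extract deals from HTML, convert prices to cents, and load them into SQLite."""
    parser = DealParser()
    parser.feed(html)  # extract
    rows = [
        (title, round(float(price.lstrip("$")) * 100))  # transform
        for title, price in parser.deals
    ]
    connection.execute(
        "CREATE TABLE IF NOT EXISTS deals (title TEXT, price_cents INTEGER)"
    )
    connection.executemany("INSERT INTO deals VALUES (?, ?)", rows)  # load
    connection.commit()
    return len(rows)


connection = sqlite3.connect(":memory:")
print(run_etl(SAMPLE_HTML, connection))  # 2
```

Storing prices as integer cents is a deliberate choice in this sketch: it avoids floating-point surprises when you later aggregate or compare prices across snapshots.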

4. Build a Data Pipeline for a Recommendation System

Example: Tom’s News Recommender — personal news recommendation system

Recommender systems are ubiquitous online and are used to drive a better user experience. They appear in diverse industries, including online shopping, streaming services, music, news, books, search, and social media. Although data engineers sometimes won’t build the models themselves, they are traditionally involved in building the machine learning pipeline. Some helpful datasets for building a machine learning model:
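Whichever dataset you pick, the data-engineering side of a recommender usually starts with turning raw interaction logs into features a model can use. The sketch below is one simple possibility, not the approach used in the example project: it builds item co-occurrence counts from hypothetical `(user_id, item_id)` pairs and ranks unseen items by those counts.

```python
from collections import Counter, defaultdict
from itertools import permutations


def build_cooccurrence(interactions):
    """Count how often two items were consumed by the same user.

    `interactions` is an iterable of (user_id, item_id) pairs.
    """
    items_by_user = defaultdict(set)
    for user_id, item_id in interactions:
        items_by_user[user_id].add(item_id)

    cooccurrence = defaultdict(Counter)
    for items in items_by_user.values():
        for item_a, item_b in permutations(sorted(items), 2):
            cooccurrence[item_a][item_b] += 1
    return cooccurrence


def recommend(cooccurrence, seen_items, top_n=2):
    """Score unseen items by their co-occurrence with the items a user has seen."""
    scores = Counter()
    for item in seen_items:
        for other, count in cooccurrence.get(item, {}).items():
            if other not in seen_items:
                scores[other] += count
    # Sort by score (descending), then by name so ties are deterministic.
    ranked = sorted(scores.items(), key=lambda pair: (-pair[1], pair[0]))
    return [item for item, _ in ranked[:top_n]]


# Hypothetical reading log: which user read which article.
log = [
    ("u1", "a"), ("u1", "b"),
    ("u2", "a"), ("u2", "b"), ("u2", "c"),
    ("u3", "b"), ("u3", "c"),
]
matrix = build_cooccurrence(log)
print(recommend(matrix, {"a"}))  # ['b', 'c']
```

In a real pipeline the co-occurrence step would run as a scheduled batch job over much larger logs, and its output would be stored where the serving layer can read it.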

5. Build a Data Warehouse

Example: IMDB-Data-Warehouse-Management-and-Business-Intelligence

Data engineers must have a solid understanding of data warehouses and OLAP (online analytical processing). Many daily data engineering tasks involve designing, developing, and querying data warehouses to get business insights. The Data Warehouse Toolkit: The Definitive Guide to Dimensional Modeling, 3rd Edition, by Ralph Kimball is the definitive guide to start with. It includes real-life examples from various industries for building a data warehouse.

A data warehouse project can be built on top of the analytics dataset we saw earlier, but this time you apply dimensional modeling concepts to a new design. The project’s output includes ER diagrams for the data warehouse and the ETL code that extracts the raw data, transforms it, and loads it into a queryable format.
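To make the dimensional modeling idea concrete, here is a minimal star-schema sketch using SQLite from the Python standard library. The table names, columns, and sample movie rows are hypothetical and much smaller than a real warehouse; the point is only to show dimension tables with surrogate keys, a fact table that references them, and an aggregate query on top.

```python
import sqlite3

# Hypothetical raw rows: (title, genre, release_year, rating)
RAW_MOVIES = [
    ("Movie A", "Drama", 2019, 8.0),
    ("Movie B", "Drama", 2020, 7.0),
    ("Movie C", "Comedy", 2020, 6.0),
]

SCHEMA = """
CREATE TABLE dim_genre (genre_id INTEGER PRIMARY KEY, name TEXT UNIQUE);
CREATE TABLE dim_year  (year_id  INTEGER PRIMARY KEY, year INTEGER UNIQUE);
CREATE TABLE fact_rating (
    title    TEXT,
    genre_id INTEGER REFERENCES dim_genre(genre_id),
    year_id  INTEGER REFERENCES dim_year(year_id),
    rating   REAL
);
"""


def lookup_or_insert(connection, table, key_column, id_column, value):
    """Return the surrogate key for a dimension value, inserting it if missing."""
    connection.execute(
        f"INSERT OR IGNORE INTO {table} ({key_column}) VALUES (?)", (value,)
    )
    row = connection.execute(
        f"SELECT {id_column} FROM {table} WHERE {key_column} = ?", (value,)
    ).fetchone()
    return row[0]


def load_warehouse(connection, raw_rows):
    """Create the star schema and load the dimension and fact tables."""
    connection.executescript(SCHEMA)
    for title, genre, year, rating in raw_rows:
        genre_id = lookup_or_insert(connection, "dim_genre", "name", "genre_id", genre)
        year_id = lookup_or_insert(connection, "dim_year", "year", "year_id", year)
        connection.execute(
            "INSERT INTO fact_rating VALUES (?, ?, ?, ?)",
            (title, genre_id, year_id, rating),
        )
    connection.commit()


def average_rating_by_genre(connection):
    """Join the fact table to a dimension and aggregate, as an OLAP-style query."""
    return connection.execute(
        """
        SELECT g.name, AVG(f.rating)
        FROM fact_rating AS f
        JOIN dim_genre AS g ON g.genre_id = f.genre_id
        GROUP BY g.name
        ORDER BY g.name
        """
    ).fetchall()


warehouse = sqlite3.connect(":memory:")
load_warehouse(warehouse, RAW_MOVIES)
print(average_rating_by_genre(warehouse))  # [('Comedy', 6.0), ('Drama', 7.5)]
```

The same structure carries over to a real warehouse engine; what changes is mostly the scale and how the ETL is scheduled.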

6. Develop a Game with Streaming Data (A superb alternative visualization)

Example: Pac-Man Game with Streaming Data

I built a Pac-Man game driven by a streaming data feed. It is a superb alternative way to interact with users and visualize data. The game combines live streaming data with users’ input.

There are a couple of components you need to accomplish this:

- Build a streaming layer to store the data

- Build the game and think about how you’d want to leverage the streaming data

- Stitch the streaming data as input to feed the elements in the game

The game changes how dots are displayed during play. The dots are the physical locations, on a map, of the Meetup events pulled from the Meetup streaming feed. Once Pac-Man eats a dot, the banner displays the name of that Meetup event. The game is an engaging way for users to see where Meetup events are happening worldwide.
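The step of turning event locations into dots can be sketched as follows. This is not the code from my game; it is a minimal illustration that assumes a rectangular board, a plain equirectangular projection, and hypothetical names such as `Board` and `to_grid_cell`.

```python
GRID_COLUMNS = 36  # assumed board width in cells
GRID_ROWS = 18     # assumed board height in cells


def to_grid_cell(latitude: float, longitude: float) -> tuple:
    """Map a latitude/longitude pair to a (column, row) cell on the game board.

    Uses a plain equirectangular projection: longitude maps linearly to columns
    and latitude maps linearly to rows, with row 0 at the top (north).
    """
    column = int((longitude + 180.0) / 360.0 * GRID_COLUMNS)
    row = int((90.0 - latitude) / 180.0 * GRID_ROWS)
    # Clamp so that longitude 180 and latitude -90 stay on the board.
    return min(column, GRID_COLUMNS - 1), min(row, GRID_ROWS - 1)


class Board:
    """Holds dots created from streamed events, keyed by grid cell."""

    def __init__(self):
        self.dots = {}

    def add_event(self, name: str, latitude: float, longitude: float) -> None:
        """Place a dot for an event; a newer event replaces an older one in the same cell."""
        self.dots[to_grid_cell(latitude, longitude)] = name

    def eat(self, cell: tuple):
        """Remove the dot at `cell` and return its event name for the banner, if any."""
        return self.dots.pop(cell, None)


board = Board()
board.add_event("Python Meetup", 0.0, 0.0)  # hypothetical event at (0, 0)
print(to_grid_cell(0.0, 0.0))               # (18, 9)
print(board.eat((18, 9)))                   # Python Meetup
```

Each message from the streaming layer would call `add_event`, and the game loop would call `eat` whenever Pac-Man moves onto a cell.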

I wrote an article detailing how I built this game with streaming data. Read more about it:

I Built a Game By Using Streaming Data: A Fun Way for Data Visualization

Final Thoughts

Each of the projects I mentioned above usually takes 1–2 weeks of your spare time to complete. Listing one on your resume is a good way to show your passion for data engineering. You don’t need to limit yourself to the options I listed above. Be creative and bold; the world is data-driven, and many data-related opportunities are waiting for you to explore. I hope this article inspires you to build your own unique side project and enhance your data engineering skills.

More Articles

The Data Modeling Wars: Inmon vs. Kimball vs. Data Vault

Confused by data modeling? We break down the key differences between Inmon, Kimball, and Data Vault architectures so you can choose the right strategy for your data warehouse.

Apache Spark 4.1 is Here: The Next Chapter in Unified Analytics

Apache Spark 4.1 is here. Discover how Real-Time Mode (RTM), Declarative Pipelines, and Arrow-Native UDFs are transforming data engineering and PySpark performance

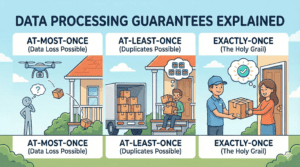

Data Processing Guarantees Explained: Exactly-Once, At-Least-Once, and At-Most-Once

Learn the difference between data processing guarantees (At-Most-Once, At-Least-Once, Exactly-Once) with simple real-world examples. Perfect for data engineering beginners